Integrate Large Language Models (GPT‑4, Gemini, Claude) and classical ML into your security operations. Learn to automate alert triage, generate detection rules, simulate intelligent adversaries, and protect your organization from AI‑powered threats — all while understanding the unique risks of GenAI.

✔ 60% hands-on labs • 15+ AI security projects • Build your own SOC copilot • Red‑team vs. Blue‑team AI simulations • Capstone: Autonomous security agent • 24/7 cloud lab access.

LLM prompt engineering for security, AI‑based log analysis, anomaly detection (isolation forest, autoencoders), GenAI for phishing generation/defense, AI security guardrails, adversarial ML, OWASP Top 10 for LLMs, and secure RAG pipelines.

Basic knowledge of Python and cybersecurity fundamentals (threats, logs, network) is recommended. No prior AI/ML experience required — we start from basics.

Build a GenAI‑powered SIEM assistant, create an automated phishing detection system, develop an LLM‑based deception honeypot, and implement a RAG chatbot for security documentation.

Mock tests, resume review, portfolio building, and interview preparation for AI Security Engineer, SecOps Automation Engineer, and Cyber AI Specialist roles.

Tailored AI security upskilling for teams and enterprises

Choose from AI defense, offensive AI, or AI governance tracks.

Hands-on labs with secure LLM playgrounds and Jupyter notebooks.

Monitor progress and skill gaps with detailed analytics.

Volume discounts for teams of 10+, plus pay-as-you-go options.

Dedicated AI security mentors to assist your learners anytime.

Single point of contact for seamless training delivery.

Get a custom quote for your organization's GenAI security training.

From LLM Security to Autonomous Defense Agents

Master prompt injection prevention, output filtering, and secure prompt design. Build system prompts that resist adversarial manipulation.

Use transformer models and LLMs to parse massive log volumes, detect anomalies, and generate natural language incident summaries.

Create dynamic, AI‑generated decoy content and realistic fake infrastructure to lure attackers.

Understand evasion attacks, data poisoning, and model extraction. Defend with adversarial training and robust ML pipelines.

Build safe GenAI applications that retrieve internal security knowledge without leaking sensitive data.

Develop autonomous agents that triage alerts, execute playbooks, and escalate with human‑in‑the‑loop.

Ideal Candidates for AI/GenAI for Cybersecurity Certification

Designed for cybersecurity and IT professionals with basic Python skills and security awareness. This program bridges AI technology with security operations, making you a pioneer in one of the fastest‑growing fields. Average salaries for AI Security Engineers in India range from ₹12 Lakhs to ₹30+ Lakhs per year.

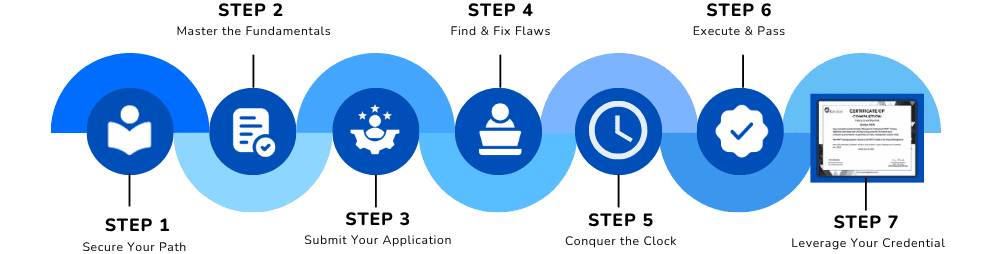

Your Step‑by‑Step Path to AI‑Powered Defense

Learn core AI/ML concepts, Python for data science, and apply them to security datasets like logs and network flows.

What You Need Before You Start

Objective: To certify your ability to design, implement, and defend AI‑powered security solutions. Candidates should have:

Ability to write simple scripts, use pandas and REST APIs. We provide a Python refresher module.

Understanding of common threats, log formats (Syslog, JSON), and basic incident response steps.

No prior ML experience required — we start from data preprocessing and model training for security use cases.

Comprehensive AI cybersecurity modules – from ML basics to GenAI security agents

Supervised vs. unsupervised learning, feature engineering. Work with Pandas, Scikit‑learn on security logs.

Implement Isolation Forest, One‑Class SVM, and Autoencoders to detect rare security events.

Prompt engineering, API usage, and open‑source models. Understand tokenization and embeddings.

Use LLMs to parse alerts, enrich incidents, and generate human‑readable executive summaries.

Attack techniques: indirect injection, role‑play bypass. Defenses: input sanitization, output filtering, moderation APIs.

Build retrieval‑augmented generation systems that respect access controls and prevent data leakage.

Generate convincing spear‑phishing emails, realistic lures, and pretexts using GenAI.

Use LLMs to mutate malware, evade signatures, and create dynamic exploit variations.

Craft adversarial examples, manipulate training data, and understand model stealing.

Implement adversarial training, input preprocessing, and certified robustness techniques.

Use LangChain, AutoGen, or CrewAI to create autonomous triage and containment agents.

Integrate with SIEMs, ticketing systems, and orchestration workflows.

Create realistic fake documents, databases, and network services to trap attackers.

Use LLMs to converse with intruders in honeypots, wasting their time and gathering intelligence.

Understand compliance requirements for AI in security operations.

Apply techniques to train models without exposing sensitive data.

Identify synthetic media, voice cloning, and AI‑generated disinformation.

Use LLMs to correlate disparate data sources and uncover stealthy intrusions.

Build a fully autonomous agent that ingests logs, detects threats, explains decisions, and recommends containment actions.

Test agent efficacy; review LLM security patterns and adversarial ML concepts for final assessment.

Lifetime Access

Real AI Security Projects

Mentor Support

Practice Assignments

Certificate Preparation

Join 9,800+ forward‑looking defenders who have mastered AI/GenAI for cybersecurity. Be part of the next generation of security professionals who defend faster, smarter, and at scale.

✅ Limited seats available for the upcoming batch • EMI options available • Includes LLM API credits